Over the last two years, I’ve been working with an established American financial company on building a direct-to-consumer digital experience for life insurance in 30 minutes or less, as if Domino’s were in the business of protecting families on the worst day of their lives.

Structured like its own FinTech startup inside of a larger enterprise, it was a blank canvas that has since been painted edge-to-edge by a passionate team who hustled through an MVP in less than 12 months. As of March 2022, the product has reached its one-year anniversary and protects thousands of families across America.

During these same two years, I have also been building up my own startup at Soundbite.AI, a platform for sharing short-form content in the enterprise, but the bills had to be paid somehow and finance is — as they say — where all the money is.

The new system required both classical principles from when I started college two decades ago and an extension of the micro-services knowledge I first built near the tail end of five years at LiveTiles (and captured in my Medium article on the evolution of architectural approaches at LiveTiles). It required me to fan out my efforts, work concurrently with my cross-disciplinary team, and attempt to hyper-scale my skills without losing observability on what’s most important: our customer.

How do you wrangle over 150 micro-services on the back of a team of fewer than 15 engineers? How do you move fast without breaking things? How do you tie together the many tools of Amazon Web Services to go big from day one?

Meet Sophia

Before talking about what the system does, let me first touch upon the product management mindset that guided the need for zero-stress term life insurance with instant underwriting and support in your pocket.

Meet Sophia…

She is in her 30s, is an educated professional, and a young mother. She wants to make sure that should the unexpected happen, her family only needs to worry about drying their eyes and not draining their bank account. She has never had life insurance before, but she has grown up in a world where you can get anything from your couch using a device in the palm of your hand.

In the year 2020, what were her options for financial freedom?

There were a variety of FinTech startups that offered mobile-app-based financial tools; however, they were (and still are) just replacing a sales agent in most cases rather than reimagining financial services from the inside out. This meant there would always be gaps in the experience for her as a customer, and the provider would be at a disadvantage, locked out of the long-term evolution of the relationship.

Further, there is an inevitable trend for everything to go instant and online. Any company — financial or not — failing to digitize by the end of the decade is going to be left behind.

The North Star outcome for the platform could be summed up for me as: One day our product could be promoted by a tear-jerker Super Bowl ad about what’s really important in life. It could then issue a million term life policies in one afternoon — each applicant waiting longer for their take-and-bake pizza to cook than for their family of football fans to secure their financial freedom.

How? The answer for our parent company was to start a special project inside of the established company that would incubate a world-class consumer-grade experience, built with massive scale in mind. It would be done by a team merging existing employees who wanted to upskill into the cloud with startup veterans (such as myself) who were already experts in cloud best practices and had an infectiously agile mindset.

Creating a platform intended for mass markets on day one imposed new challenges, but along the way, I leveled up to not only understand a new cloud platform in Amazon Web Services (or AWS — having come from over a decade in Microsoft Azure) but also what it takes for a distributed system to deliver.

Born in the Cloud

Informed by his experience building Microsoft Bing and more recently his own startup around big data, my hiring manager — the VP in charge of transforming this ragtag outfit into the next generation of technology for the company — set about defining the qualities of a “large-scale distributed system”.

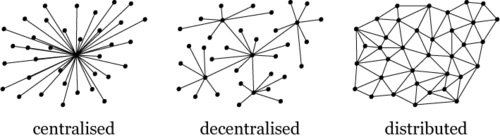

In so many ways, I realized that while I had worked with “serverless” technologies before, enterprise SaaS is most often a “decentralized” system where one can just route amongst smaller monoliths per customer organization to provide scale via horizontal partitioning. Direct-to-consumer apps with complex workflows need to be truly distributed in that pieces communicate in an interconnected and on-demand ecosystem.

In our case, I would say it’s defined by at least three qualities:

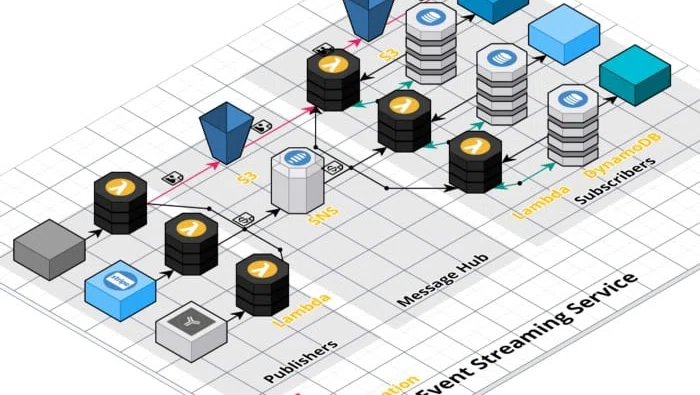

- Micro-Services: Each unit of work would be in its own TypeScript & Node Lambda function, processing synchronously through API Gateway or asynchronously via event-driven patterns (see below). This solves both a scaling issue, by eliminating all bundling of computing around monoliths, and a social coordination issue, in that each endpoint becomes its own project which can have its own team underpinning it. As of this writing, we have over 150 Lambdas in our system and the count just keeps growing.

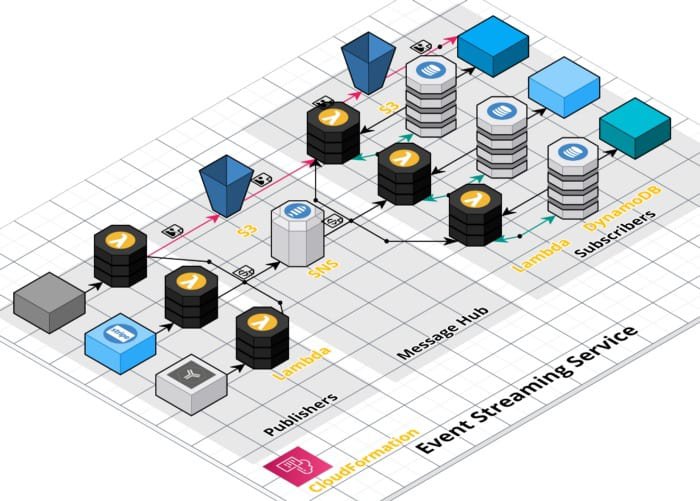

- Event-Driven: Processing often happens asynchronously and usually concurrently. This approach is enabled by architectural patterns like queueing (SQS) and fan-out events (SNS), along with integrating third-party systems via webhooks. This meant that the micro-services became loosely coupled and interchangeable, with an event stream forming a spine for the data moving through the system and each process attaching to it like just another little rib.

- Best-of-Breed: In the perennial spirit of established businesses preferring “Buy” over “Build”, we selected the best-in-class third-party system for every possible domain to limit our investment in custom code. From well-known third parties (like payments in Stripe) to industry niche systems (like accelerated underwriting in Munich Re), we ultimately chose 16 supporting cloud service providers (not counting AWS first-party) to underpin our platform and focus our effort on differentiated features rather than reinventing admin interfaces or other problems that have already been solved. This meant the main organs of the system could specialize while our micro-services and events simply glued them together into a coherent organism.
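To make the first two qualities concrete, here is a minimal sketch of one unit of work exposed both ways: a synchronous HTTP-style entry point and an asynchronous queue-style entry point. It is not our production code. The event interfaces are simplified local stand-ins (a real project would more likely use the community `aws-lambda` typings), the `ApplicationSubmitted` payload and all function names are hypothetical, and the handlers are plain synchronous functions so the sketch runs without any AWS dependencies.

```typescript
// Simplified local stand-ins for the event shapes (assumptions, not the full
// API Gateway or SQS event types).
interface HttpLikeEvent { body: string | null }
interface HttpLikeResult { statusCode: number; body: string }
interface QueueLikeEvent { Records: { body: string }[] }

// Hypothetical payload for one unit of work.
interface ApplicationSubmitted { applicationId: string; applicantAge: number }

// The shared unit of work: validate the payload or throw.
function parseApplication(raw: string): ApplicationSubmitted {
  const data = JSON.parse(raw);
  if (typeof data.applicationId !== "string" || typeof data.applicantAge !== "number") {
    throw new Error("invalid application payload");
  }
  return { applicationId: data.applicationId, applicantAge: data.applicantAge };
}

// Synchronous path: the caller sends an HTTP-shaped event and waits for a reply.
function handleHttp(evt: HttpLikeEvent): HttpLikeResult {
  try {
    const app = parseApplication(evt.body === null ? "{}" : evt.body);
    return { statusCode: 202, body: JSON.stringify({ accepted: app.applicationId }) };
  } catch (err) {
    return { statusCode: 400, body: JSON.stringify({ error: (err as Error).message }) };
  }
}

// Asynchronous path: a queue subscribed to a topic delivers a batch of records.
// Without raw message delivery, each SQS body is an SNS envelope whose
// `Message` field carries the original payload as a string.
function handleQueue(evt: QueueLikeEvent): string[] {
  return evt.Records.map((record) => {
    const envelope = JSON.parse(record.body);
    return parseApplication(envelope.Message).applicationId;
  });
}
```

The point of the split is that the validation logic lives in one place, while each entry point only translates its transport's envelope.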

My Role

Joining the team after they had only a few months of prototypes under their belt, I was a senior individual contributor responsible for starting to coalesce a “production-ready” set of features. This included raising the bar on TypeScript code quality, determining a way to share code across the poly-repo structure of the services (lots of custom npm packages), and defining some of the architectural approaches around key system concepts.

Early decisions included the approach to making an “event stream” for events processing from application through underwriting, issuance, and even (one day) claims. After comparing Amazon Managed Streaming for Apache Kafka (MSK), Simple Notification Service (SNS), and Kinesis, SNS emerged victorious as the simplest solution, and notably the one with the largest pool of likely experience.

Wiring it up required a combination of establishing patterns for our micro-services, translating from third-party webhooks, and even working out how to poll more legacy systems and then distribute analogous events across SNS topics.

My early CloudCraft diagram visualizing my event stream architecture, which includes a vanilla SNS implementation for small messages plus an SNS+S3 pattern for larger ones
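The SNS+S3 idea for larger messages can be sketched roughly as follows: small payloads ride inline on the topic, while large ones are parked in object storage and only a pointer is published. This is an illustration under assumptions rather than our actual implementation. `BlobStoreLike` is an in-memory stand-in for S3, the envelope shape and function names are hypothetical, and the size threshold is an assumed value counted in characters for simplicity.

```typescript
// Stand-in for S3: anything that can store and fetch a string by key.
interface BlobStoreLike {
  put(key: string, body: string): void;
  get(key: string): string;
}

// What actually travels over the topic: the payload itself, or a pointer to it.
type StreamEnvelope =
  | { kind: "inline"; payload: string }
  | { kind: "pointer"; key: string };

// Assumed threshold, counted in characters. A real check would count encoded
// bytes against whatever limit the topic enforces.
const DEFAULT_INLINE_LIMIT = 256 * 1024;

// Publisher side: decide whether the payload fits inline.
function wrapForPublish(
  eventId: string,
  payload: string,
  store: BlobStoreLike,
  inlineLimit: number = DEFAULT_INLINE_LIMIT
): StreamEnvelope {
  if (payload.length <= inlineLimit) {
    return { kind: "inline", payload };
  }
  const key = `events/${eventId}.json`;
  store.put(key, payload);
  return { kind: "pointer", key };
}

// Subscriber side: resolve the envelope back into the original payload.
function unwrapOnReceive(envelope: StreamEnvelope, store: BlobStoreLike): string {
  return envelope.kind === "inline" ? envelope.payload : store.get(envelope.key);
}

// In-memory store so the sketch runs without any cloud dependencies.
function createMemoryStore(): BlobStoreLike {
  const blobs: { [key: string]: string } = {};
  return {
    put: (key, body) => { blobs[key] = body; },
    get: (key) => {
      const found = blobs[key];
      if (found === undefined) throw new Error(`missing blob: ${key}`);
      return found;
    },
  };
}
```

Subscribers never need to know which path a message took, since `unwrapOnReceive` hides the difference.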

Furthermore, I was right at home building up data structures inside of NoSQL data stores. This included overcoming the “NoSQL does NOT mean No Relationships” challenges by layering in hierarchical key approaches that bundle rows together into join-like structures. I came to revere Rick Houlihan like so many generations of DynamoDB developers before me and can now make a Global Secondary Index (GSI) with the best of them.
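As a rough illustration of the hierarchical key idea, the sketch below simulates a single table in memory. Related rows share a partition key, and the sort key encodes the hierarchy so that one prefix query returns a parent row together with its children, much like a join. The key formats (`CUSTOMER#…`, `POLICY#…`, `PAYMENT#…`), the sample rows, and the `queryByPrefix` helper are all hypothetical. In DynamoDB itself, the equivalent would be a query with a `begins_with` condition on the sort key.

```typescript
// One row in the simulated table: partition key, sort key, plus attributes.
interface TableItem {
  pk: string;
  sk: string;
  [attr: string]: string | number;
}

// Hypothetical sample data: two customers, policies, and payments.
const sampleTable: TableItem[] = [
  { pk: "CUSTOMER#sophia", sk: "POLICY#p-200", termYears: 10 },
  { pk: "CUSTOMER#sophia", sk: "POLICY#p-100#PAYMENT#2021-05", amountCents: 2499 },
  { pk: "CUSTOMER#sophia", sk: "POLICY#p-100", termYears: 20 },
  { pk: "CUSTOMER#sophia", sk: "POLICY#p-100#PAYMENT#2021-04", amountCents: 2499 },
  { pk: "CUSTOMER#marcus", sk: "POLICY#p-100", termYears: 30 },
];

// Mimics a key-condition query: exact partition key, sort key prefix,
// results returned in ascending sort key order.
function queryByPrefix(table: TableItem[], pk: string, skPrefix: string): TableItem[] {
  return table
    .filter((item) => item.pk === pk && item.sk.startsWith(skPrefix))
    .sort((a, b) => (a.sk < b.sk ? -1 : a.sk > b.sk ? 1 : 0));
}

// The policy row and both of its payments come back in one call.
const policyBundle = queryByPrefix(sampleTable, "CUSTOMER#sophia", "POLICY#p-100");

// Ending the prefix on a delimiter narrows to the payments only, and also
// avoids accidentally matching a sibling such as "POLICY#p-1000".
const paymentsOnly = queryByPrefix(sampleTable, "CUSTOMER#sophia", "POLICY#p-100#PAYMENT#");
```

The design choice worth noting is that the access pattern is decided up front: the key layout is what makes the "join" cheap, so it has to be planned around the queries the application will actually run.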

Finally, I authored dozens of the micro-services, demonstrating some best practices in TypeScript that were brand new to some members of the team. There was a mix of backgrounds from Java folks who were badly missing dependency injection in the simplified world of Node (me too, my friend, me too) to folks who were outright against OOP as a concept since it violated the perfection of JavaScript (oddly enough, those folks didn’t stick around).

As someone who had worked in more environments than most, from growing that startup mindset at LiveTiles to truly understanding the stodgy bare metal world at the Department of Energy, I also developed a knack for designing the more complex back-end processes that provided ETL pipelines to legacy systems. This ultimately grew into the approach for transporting the transactional data from our NoSQL (DynamoDB) systems into the Snowflake-powered Data Warehouse where the future of analytics and integration with legacy systems will live. And when one says “Legacy” in a 60-year-old financial company, they mean it.

Some of the ETL processes even terminate in mainframes, in some cases replacing mainframes that do the work today for financial products underwritten and issued before I was even born.
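One small piece of a DynamoDB-to-warehouse hop like the one described above is turning a type-tagged DynamoDB item into a flat row with plain column values. The sketch below shows that transform step only, and it is an assumption about what such a step could look like, not a description of our pipeline. It handles a subset of DynamoDB's attribute types, and the `flattenItem` name and the underscore column convention are made up for illustration.

```typescript
// DynamoDB's JSON format tags every value with its type. Only a subset of
// the types is modeled here.
type AttrValue =
  | { S: string }
  | { N: string }
  | { BOOL: boolean }
  | { NULL: true }
  | { M: { [key: string]: AttrValue } };

type Cell = string | number | boolean | null;

// Flattens an item into a single-level row. Nested maps become columns
// joined with an underscore (an assumed naming convention).
function flattenItem(
  item: { [key: string]: AttrValue },
  prefix: string = ""
): { [column: string]: Cell } {
  const row: { [column: string]: Cell } = {};
  for (const key of Object.keys(item)) {
    const column = prefix + key;
    const value = item[key];
    if ("S" in value) {
      row[column] = value.S;
    } else if ("N" in value) {
      // Numbers arrive as strings. Number() can lose precision on very large
      // or very precise values, so a real pipeline might keep them as text.
      row[column] = Number(value.N);
    } else if ("BOOL" in value) {
      row[column] = value.BOOL;
    } else if ("NULL" in value) {
      row[column] = null;
    } else {
      // Remaining case is a nested map: recurse and merge its columns.
      const nested = flattenItem(value.M, column + "_");
      for (const nestedColumn of Object.keys(nested)) {
        row[nestedColumn] = nested[nestedColumn];
      }
    }
  }
  return row;
}
```

For example, an item with a nested `insured` map containing `age` and `state` would come out with `insured_age` and `insured_state` columns alongside the top-level ones.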

Shockingly enough, I had a few occasions where I would receive e-mails of COBOL code illustrating subtle nuances of existing systems, then painstakingly use cheat sheets and references on the internet to translate them into TypeScript for our new implementation when it needed to mirror them.

From the most peculiar to the most rewarding, role-modeling and mentoring as a senior contributor is still my favorite activity as someone who is much more extroverted than the average coder. Time spent screen-sharing and pair programming, plus design reviews and retrospectives with some great people doing great work was a blast. As time went on I became a “pod lead” who was leading technical implementation for a cross-functional five-man band while collaborating as one of three folks setting architectural direction.

My Learning Path

I did arrive in the role with some gaps and trepidations I had to overcome along the way. Learning is a skill and re-learning how to learn is a constant, so yet another job with yet another buffet of technology was an exciting challenge.

First and foremost, while I had paid for life insurance in the past, I had very little understanding of its industry. After years marinating in the process, I still can’t tell you the difference between whole and universal life, but when it comes to term insurance, the lifecycle of a policy, retaining a customer, and making sure the agent gets paid, I’m your man.

Getting a grasp on Amazon Web Services started with analogies between Microsoft Azure and AWS, but soon had to grow into full understanding. This meant not only consulting the key first-party documentation (docs.aws.amazon.com must have been my number one website for 2020) but also identifying third-party guidance that made for structured learning. Courses on A Cloud Guru (now owned by Pluralsight) provided an overall framework, while community-driven resources like Medium and Stack Overflow offered more targeted experience and solutions.

This learning journey for AWS culminated in November of 2021 when I was finally able to attend AWS re:Invent after they skipped a year due to the pandemic. I spent an entire week alongside my team learning not just the present, but also the future of Amazon’s cloud.

Amongst the skills gaps that were overcome with my learning path, Infrastructure as Code (IaC) was likely the newest frontier. The best practice of turning all configuration into code rapidly becomes the only way to manage the herd of resources (remember: cattle, not pets!), and I feel prepared to keep declaring my intentions in my architecture rather than weaving another brittle scripting approach like in the more primitive days of early Azure Resource Manager (ARM) templates employed at LiveTiles.

Finally, the broader patterns of event-driven distributed systems were tested like never before. I picked through books on designing distributed systems (falling back to the O’Reilly books on the subject if only for more beautiful nature illustrations) much as I had picked through Martin Fowler’s work on enterprise architecture. We spent a lot of time writing and reviewing designs, guided primarily by the experience of our hiring manager, who was like the CTO of our nascent little FinTech startup inside the great big enterprise.

To this day, I still struggle with the nuances and trade-offs of distributed transactions, especially at high velocity and volume. There are many times where the simplicity of an atomic transaction is missed, yet there are other times where sacrificing data quality by allowing an incomplete process to “give me what you have, don’t worry about what I need” is a happy compromise because it means the customers never have to wait for processing and there can be resilience even without completeness.
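The “give me what you have” compromise can be sketched as a gather step that runs several lookups concurrently, keeps whatever succeeds, and records what failed instead of failing the whole request. This is a minimal illustration under assumptions, not our production approach: the `gatherWhatWeHave` name, the `PartialAnswer` shape, and the source names in the usage example are hypothetical.

```typescript
// A lookup is any async call that may succeed or fail on its own.
type EnrichmentLookup = () => Promise<string>;

// The result keeps successes and failures side by side, so a later process
// can decide whether to retry or backfill the missing pieces.
interface PartialAnswer {
  found: { [source: string]: string };
  missing: string[];
}

async function gatherWhatWeHave(
  lookups: { [source: string]: EnrichmentLookup }
): Promise<PartialAnswer> {
  const answer: PartialAnswer = { found: {}, missing: [] };
  // Each lookup is settled individually, so one failure cannot reject the
  // combined Promise.all and discard the results that did arrive.
  await Promise.all(
    Object.keys(lookups).map(async (source) => {
      try {
        answer.found[source] = await lookups[source]();
      } catch {
        answer.missing.push(source);
      }
    })
  );
  return answer;
}

// Usage: one source answers, the other fails, and the caller still gets a result.
const partialDemo = gatherWhatWeHave({
  identity: async () => "verified",
  prescriptions: async () => {
    throw new Error("vendor timeout");
  },
});
```

The trade-off is exactly the one described above: the customer is never blocked on the slowest dependency, but the system has to tolerate, and eventually repair, incomplete records.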

The best practices for overcoming SRE/DevOps challenges of distributed systems are also still an art, not a science. We’ve been generalists writing features and supporting the platform for the last year, but touching production (especially in regulated environments) still feels like disarming a bomb sometimes. I look forward to meeting and learning from a true guru one day.

The Distributed Future

Distributed systems were a topic twenty years ago when I first started my computer science degree at Washington State University. Back in the days of CORBA and C++ apps I never really got a chance to dive in, but in the year 2022, they’re becoming the reference architecture for every B2C app.

The platform our team has built is now protecting thousands of families across America. Near the end of 2021, we were recognized by Gartner with an Eye on Innovation Award for Financial Services. In my eyes, that even trumps the Forrester award from my last year at LiveTiles since it arrived in the second year of the product. What an accomplishment in so short a time.

The skills gathered with the rapid delivery of a next-generation financial platform mean I’ve likely worked across every major paradigm and business model of programming now. From boxed software and bare metal web 2.0 in the 00s, to decentralized SaaS in the 10s, to fully distributed cloud in the 20s. One can only imagine where the world might be in ten more years.

I certainly know technology will still be helping people like Sophia — and millions more like her — in the decades to come.