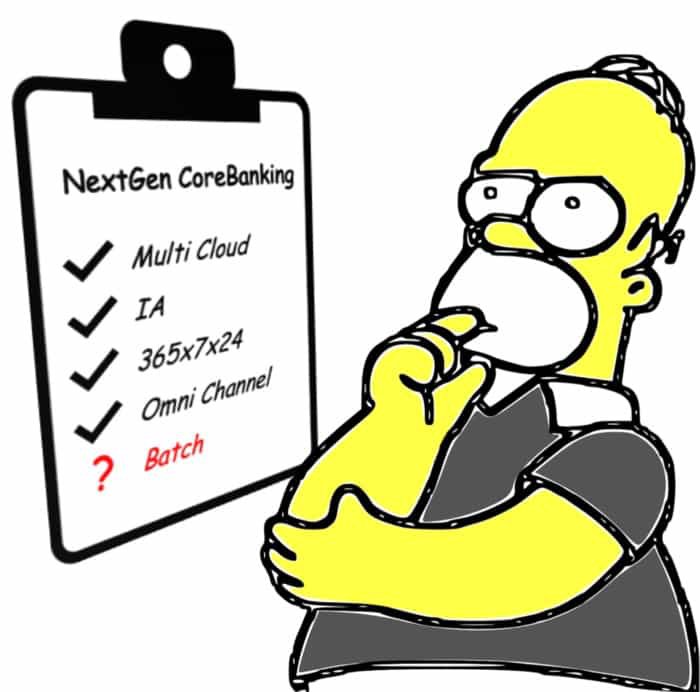

Transforming their core banking systems is on the agenda of every major bank in the world, and most of these institutions are analyzing, planning or in the early stages of executing transformation programs that will replace existing applications with the next generation of core banking systems. All of them face a serious challenge: batch processing.

Batch processes represent a very high percentage of the lines of code in the legacy systems to be replaced. They support the business functions that are not resolved in real time through transaction processing. When you withdraw money at an ATM, the withdrawal is processed immediately (in real time), but it is probably posted to the bank's general ledger during the evening or night, in the “batch processes”. A very significant part of the business functions is actually processed in batch.

Batch processes were originally conceived at a time when financial institutions operated only during branch opening hours. They were designed for infrastructures such as mainframes, whose costs were based on contracted capacity; that capacity had to be optimized by moving workloads to batch processes running in the evening and at night, when the branches were closed.

Nowadays, banks operate 365 days a year, 24 hours a day, 7 days a week through their digital channels. They aim to fully automate many process activities that were previously manual, and are introducing artificial intelligence capabilities into business processes for that purpose; business units demand real-time information that enables actionable insights; and banks are adopting the cloud as their target infrastructure, where you pay for use rather than for contracted capacity and the time frame in which resources are consumed is no longer relevant.

With such substantial changes, it is worth asking whether batch processes make sense in new generation core systems. Can batch processes play a role in the next generation of banking systems, in the era of 5G, artificial intelligence and, for example, the Internet of Things, when even refrigerators will communicate in real time with online supermarkets or with the technical service in case of failure?

When facing core banking transformation programs, are we asking ourselves the right questions? We should not ask which current batch processes can be transformed into real time, or what the criteria for such decisions are; we should question whether any business process at all should be developed in batch, and why. Above all, we should discard answers such as “there have always been batch processes” or “it has always been done like this; how could I not implement the settlement of accounts as a batch process?”

This article tries to prove that batch processes do not make sense in the new generation of core banking systems. It aims to show that there is no point in looking for technically complex solutions to support batch processes, because there is no reason for these processes to exist: the business requires information and results in real time, with 24x7 operation; hyper-modular applications with very low coupling are required to achieve agility, efficiency and maintainability; the new technologies are optimized for real-time processing, with technical characteristics different from those required by batch; and they are deployed on pay-per-use cloud platforms that eliminate the need to distribute the load throughout the day to optimize costs.

What are the batch processes in the core banking systems?

To analyze whether batch processes are needed in the next generation of core systems, we should first have a clear definition of what we consider a batch process, since this question is the first point of disagreement in any conversation on the subject. Some definitions or characteristics of batch processes are:

- A planned process. Batch processes are executed at a time determined by temporal or dependency conditions. They run orchestrated by a scheduler, and most of them simply start when others finish. The scheduler manages the conditions between processes based on execution events and execution control rules: it launches a process when it receives the event that the previous process it depends on has terminated. Some processes run at specific date/time conditions, but they are not the dominant case, and they are not exclusive to batch, since many systems have scheduled events. The interesting feature is that dependency control is done at the process level: a process begins when one or more others end. In payment processing, for example, the “credit into account” step (step 2 of the process) cannot start until all the payments in the same batch have been “validated” (step 1 of the process). In contrast, in real-time processes, dependencies are established at the level of individual operations: a payment is posted to the account as soon as that individual payment has been validated, without waiting for the whole batch to be validated.

- Bulk processes. The second characteristic of a batch process is that it is a bulk process, in which batches of operations are processed, generally sequentially. For example, receiving a file with direct debit orders triggers a batch process that processes those orders. This is clearly a characteristic of batch processes, although it can also be found in many real-time processes: modern payment processing solutions, for example, break payment order files down into individual orders that are processed independently, in real time.

- Batch processes run on “batch technical frameworks”. Batch processes run on a technical execution platform designed for batch processing, such as JCL on mainframes, or Spring Batch for applications developed in Java.

- Batch processes are those run outside office hours, when the “online” is not active. This is a traditional definition of batch processes, but it is no longer valid. In the past, databases had problems handling real-time transactions concurrently with updates from batch processes. This problem has largely been overcome: batch processes now run while the branches are open and, in any case, concurrently with operations from the digital channels (web, mobile…) that operate 24x7. It is still true that batch processes run outside office hours whenever possible, to optimize load distribution on the application servers and minimize their cost.
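To make the first characteristic more concrete, the following Python sketch shows a toy scheduler that launches each process only when every process it depends on has ended. It is a minimal illustration under stated assumptions: the `run_schedule` function and the job names are hypothetical, the “process ended” event is simulated in memory, and production schedulers add calendars, date/time triggers, restarts and many other control rules.

```python
from collections import deque
from typing import Callable, Dict, List


def run_schedule(jobs: Dict[str, Callable[[], None]],
                 depends_on: Dict[str, List[str]]) -> List[str]:
    """Hypothetical toy scheduler: launch each job when all its predecessors end.

    Dependencies are declared between whole processes (jobs), never between
    individual records: this is the step-level control typical of batch.
    """
    pending = {name: set(depends_on.get(name, [])) for name in jobs}
    ready = deque(sorted(name for name, deps in pending.items() if not deps))
    finished: List[str] = []
    while ready:
        name = ready.popleft()
        jobs[name]()                 # run the whole process
        finished.append(name)        # simulated "process ended" event
        for other, deps in pending.items():
            if name in deps:
                deps.discard(name)
                # Launch a dependent job once its last predecessor has ended.
                if not deps and other not in finished and other not in ready:
                    ready.append(other)
    if len(finished) != len(jobs):
        raise RuntimeError("unsatisfiable or circular dependencies")
    return finished


# Usage: the payment example, with hypothetical job names.
log: List[str] = []
jobs = {
    "validate_payments": lambda: log.append("validate"),
    "credit_accounts": lambda: log.append("credit"),
    "post_to_ledger": lambda: log.append("ledger"),
}
order = run_schedule(jobs, {"credit_accounts": ["validate_payments"],
                            "post_to_ledger": ["credit_accounts"]})
```

Note that `credit_accounts` cannot start until `validate_payments` has finished with every record in the batch, which is exactly the coupling between whole processes described above.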

The definition of batch process used in this article combines the first three characteristics: planned processes, bulk processing and (usually) execution on software specialized for this type of process.

We define a “batch process” as the processing of batches of records or instructions through sequences of activities that a scheduler orchestrates at the level of the process step, not of the individual record or event, within a technological framework specifically developed to support this type of processing. From this definition it is worth highlighting:

- The process is structured in steps or activities.

- Each step processes full batches of operations or records.

- The control of the process flow is done at the process step level, not at the level of a single record or operation.
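The difference between step-level and record-level flow control can be illustrated with the payment example used earlier. The Python sketch below is only an illustration under assumptions: the `Payment` tuple, the `validate` rule and both functions are hypothetical, and balances are kept in a plain dictionary instead of a real ledger.

```python
from typing import Dict, List, Tuple

# Hypothetical payment shape: (payment_id, account, amount).
Payment = Tuple[str, str, float]


def validate(payment: Payment) -> bool:
    # Hypothetical validation rule: amounts must be positive.
    return payment[2] > 0


def batch_style(payments: List[Payment], balances: Dict[str, float]) -> None:
    # Step 1 processes the WHOLE batch before step 2 may start:
    # flow control happens at the process step level.
    validated = [p for p in payments if validate(p)]
    # Step 2 ("credit into account") only starts once step 1 has ended.
    for _, account, amount in validated:
        balances[account] = balances.get(account, 0.0) + amount


def real_time_style(payment: Payment, balances: Dict[str, float]) -> None:
    # Each payment flows through both steps on its own:
    # flow control happens at the level of the individual operation.
    if validate(payment):
        _, account, amount = payment
        balances[account] = balances.get(account, 0.0) + amount


payments = [("p1", "ACC-1", 100.0), ("p2", "ACC-2", -5.0), ("p3", "ACC-1", 20.0)]

batch_balances: Dict[str, float] = {}
batch_style(payments, batch_balances)

rt_balances: Dict[str, float] = {}
for p in payments:  # in a real system each call would be triggered by an arriving event
    real_time_style(p, rt_balances)
```

Both approaches end with the same balances, but in the real-time version the credit for `p1` is visible as soon as `p1` is validated, whereas in the batch version no account is credited until the whole batch has gone through step 1.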

Origins of batch processes in the banking core.

The batch processes in legacy core banking systems originate in a historical period when financial institutions operated only during “branch opening hours”, on infrastructures with limited and expensive resources (our youngest colleagues will not remember the multi-million programs to adapt legacy core banking systems to the arrival of the year 2000, needed because the years in date fields were stored with two digits instead of four to save storage). Core systems ran on mainframes or dedicated application servers whose cost depended on processing capacity; that capacity peaked during bank opening hours but was wasted during the evening and night. Everything that could be delayed was implemented as batch processes, to minimize the processing capacity required during office opening hours.

If we compare this scenario with the current one, we see a radical change. Nowadays, banks operate 365 days a year, 24 hours a day, 7 days a week through their electronic channels, and the branch has become just one more channel (some institutions, born purely digital, have no branch network at all).

Traditional infrastructures are being replaced by cloud infrastructures, where you pay for the actual use you make of them, not for a contracted capacity that you may or may not use.

The business models of financial institutions are changing from product-centric models, with sales strategies to “place” certain products on the market, to customer-centric models, with customer service strategies that identify the best product(s) for each customer's needs and reach hyper-customization of products for each customer. Financial institutions also aim to streamline and optimize processes to provide a better customer experience, by infusing artificial intelligence into business processes. All these changes in the business model require real-time information (actionable insights) that batch processes cannot provide.

As a result, the scenario that led to the development of batch processes is no longer valid, which raises doubts about the applicability of the batch processing concept itself.

Impact of using batch processes

The immediate consequence of using batch processes is the lack of real-time data to implement real-time insight capabilities. Many financial institutions, for example, do not have an integrated, real-time view of customer information (the famous customer 360 view): part of the data in that view comes from the “closing of the previous day” because it is calculated in a batch process. There are hundreds of other examples, but suffice it to say that most of the management information banks use to make business decisions is, at best, from the previous day.

Batch processes favor the use of data files for communication between processes, which creates a very high level of coupling between applications and is the fundamental problem when modernizing core systems. In fact, most banks' IT teams talk about “the batch”, not about the batch of specific domains such as the “current account batch” or the “credit products batch”: given the intense coupling between all the processes, it is not possible to tell which portions belong to which application.

New generation core systems aim to exploit cloud infrastructures and new technologies, which requires a high level of modularity in the applications; this is hardly compatible with the large number of strong dependencies generated by batch processes.

A third impact of implementing batch processes is related to the technology itself. Traditional platforms such as mainframes were very efficient at processing millions of read/write instructions, which is what batch processes mostly do, whereas the new technical platforms used in the cloud work better with in-memory processing. They are not designed for batch processing and require specific batch frameworks (e.g. Spring Batch) or unusual uses of other design patterns, such as “micro batch”: breaking bulk files into many smaller batches and processing them in parallel so that the overall process does not take too long. All these scenarios turn the simple, streamlined batch processing of legacy platforms into complex technical architectures, adding complexity just for the sake of implementing batch processing.
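As an illustration of the “micro batch” pattern, the Python sketch below splits the records of a bulk file into fixed-size chunks and processes the chunks in parallel. It is a minimal sketch under assumptions: `process_chunk` is a hypothetical placeholder that only counts non-empty records, and Python threads stand in for the separate workers or containers a cloud deployment would actually use.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Iterator, List


def chunks(records: List[str], size: int) -> Iterator[List[str]]:
    """Split a bulk file's records into consecutive chunks of at most `size`."""
    for start in range(0, len(records), size):
        yield records[start:start + size]


def process_chunk(chunk: List[str]) -> int:
    # Hypothetical per-chunk work: here it only counts non-empty records.
    return sum(1 for record in chunk if record.strip())


def micro_batch(records: List[str], size: int = 1000, workers: int = 4) -> int:
    # The extra moving parts (chunk size, worker pool, merging of partial
    # results) are the complexity this pattern adds on cloud platforms.
    # Threads stand in for the separate workers a cloud deployment would use.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(process_chunk, chunks(records, size)))


# Usage: a hypothetical bulk file with 2,500 orders and one blank line.
records = [f"order-{i}" for i in range(2500)] + [""]
total = micro_batch(records, size=1000)
```

Even in this toy form, the design has to choose a chunk size, a degree of parallelism and a way to merge partial results, none of which relate to the business function being implemented.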

Typology of batch processes and alternative patterns

Perhaps the best approach to decide whether batch processes make sense in the new generation of core banking systems in the cloud is to identify the types of current batch processes and analyze whether they still make sense in the new scenario, or what the alternatives are:

Interfaces between applications.

There are many batch processes that create interface files for other applications, including large informational platforms such as data warehouses, data pools, etc. Loading data between applications in batch means the data is not available in real time but, generally, the next day, which is one of the main limitations of current informational systems. With the new need to provide actionable insights by applying artificial intelligence to real-time data, these batch integration modes are not valid in new generation banking systems; they are replaced by near-real-time integration patterns, generally through the streaming of business events.

These interfaces are implemented by sending and receiving data files, which creates high coupling between systems and dependencies with downstream systems. Any change in, for example, an account application that sends files to downstream systems such as accounting or reporting applications impacts those applications and limits their maintainability. In the new generation of systems it is an absolute priority to replace these “push” patterns of data with “pull” patterns based on event subscription, where the applications that need the data retrieve it from its sources instead of waiting to receive it.
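The subscription pattern can be sketched in a few lines of Python. This is a deliberately simplified illustration: `EventBus`, `AccountEvent`, `AccountingApp` and the `account-events` topic are hypothetical names, and the in-memory bus calls subscribers synchronously, whereas an event streaming platform (for example Apache Kafka) would persist the events so that each consumer reads them at its own pace.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable, DefaultDict, List


@dataclass(frozen=True)
class AccountEvent:
    # Hypothetical business event published by the account application.
    account: str
    kind: str
    amount: float


Handler = Callable[[AccountEvent], None]


class EventBus:
    """Minimal in-memory stand-in for an event streaming platform."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: AccountEvent) -> None:
        # The publisher does not know who consumes its events.
        for handler in self._subscribers[topic]:
            handler(event)


class AccountingApp:
    """Downstream consumer: it subscribes to the data it needs instead of
    waiting for the account application to send it a nightly file."""

    def __init__(self, bus: EventBus) -> None:
        self.ledger: List[AccountEvent] = []
        bus.subscribe("account-events", self.ledger.append)


# Usage: a second consumer (reporting) joins without touching the publisher.
bus = EventBus()
accounting = AccountingApp(bus)
reporting: List[AccountEvent] = []
bus.subscribe("account-events", reporting.append)
bus.publish("account-events", AccountEvent("ACC-1", "withdrawal", 50.0))
```

The point of the design is that adding or changing a downstream consumer requires no change in the account application, unlike a file interface whose layout every receiver depends on.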

In summary, the use of batch processes for data exchange between applications is a pattern to be phased out in next generation banking systems and, therefore, it is not a source of requirements that justifies the existence of batch processes.

Classification, aggregation and administration processes.

This category includes processes that classify information in databases (for example, classification of credit contracts according to their risk of default), processes that aggregate information (for example, calculation of business results by branch or customer segment), and processes that implement business functions, such as the calculation of interest or of tax withholdings.

As with the previous category, these processes generate information that is only available when the batch process ends, so it cannot be used in real-time business decisions. The business increasingly requires operations to be recorded, and management information dashboards to be provided, in real time, which makes batch processes an invalid solution for implementing these business functions and calls for applications that process data in near real time, for example through “pipes and filters” patterns.
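A “pipes and filters” alternative to a nightly aggregation can be sketched with Python generators, where each filter consumes the output of the previous one as operations arrive. This is only an illustration under assumptions: the `Operation` tuple, the two filters and the branch codes are hypothetical, and in a real system each filter might be a separate service connected by event streams.

```python
from collections import defaultdict
from typing import DefaultDict, Dict, Iterable, Iterator, Tuple

# Hypothetical operation shape: (branch, product, amount).
Operation = Tuple[str, str, float]


def only_loans(ops: Iterable[Operation]) -> Iterator[Operation]:
    # Filter 1: keep only the operations relevant to this metric.
    return (op for op in ops if op[1] == "loan")


def running_totals(ops: Iterable[Operation]) -> Iterator[Dict[str, float]]:
    # Filter 2: update the aggregate per branch as each operation arrives
    # and emit the current snapshot, instead of recomputing it overnight.
    totals: DefaultDict[str, float] = defaultdict(float)
    for branch, _, amount in ops:
        totals[branch] += amount
        yield dict(totals)


# Usage: the pipe connects the filters; each snapshot is available immediately.
stream = [
    ("B01", "loan", 1000.0),
    ("B02", "deposit", 300.0),
    ("B01", "loan", 500.0),
    ("B02", "loan", 200.0),
]
snapshots = list(running_totals(only_loans(stream)))
```

Each snapshot reflects every operation received so far, so a dashboard fed by this pipeline never has to wait for the close of the day.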

Some processes, for example “interest calculation”, are always controversial, because they are scheduled processes that have always been implemented in batch. In traditional core systems they were developed in batch because mainframe platforms were designed to run this type of process very efficiently, and because it had to run after the close of the business session (that is, during the batch window). The new cloud infrastructures, however, are optimized for real-time and in-memory processing and could easily support interest calculation with non-batch patterns. Interest calculation is a process that would benefit from technologies available in modern cloud platforms, such as FaaS (Function as a Service) or non-relational databases, resulting in solutions that are more agile, flexible and easier to maintain than current batch processes.
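One possible non-batch pattern for interest is to accrue it event by event: every balance change closes the interest for the period since the previous change, so the accrued amount can be read at any moment instead of waiting for a nightly run. The Python sketch below assumes simple interest, a single annual rate, an actual/365 day count and events arriving in date order; `InterestAccrual` is a hypothetical class, and in a FaaS deployment `on_balance_change` could be the body of a function triggered by each balance event.

```python
from dataclasses import dataclass, field
from datetime import date
from decimal import ROUND_HALF_EVEN, Decimal

DAYS_IN_YEAR = Decimal(365)  # actual/365 day count, assumed for illustration


@dataclass
class InterestAccrual:
    """Hypothetical event-driven accrual of simple interest for one account."""

    annual_rate: Decimal
    balance: Decimal = Decimal("0")
    accrued: Decimal = Decimal("0")
    last_change: date = field(default_factory=date.today)

    def _interest_for(self, days: int) -> Decimal:
        return self.balance * self.annual_rate * days / DAYS_IN_YEAR

    def on_balance_change(self, day: date, delta: Decimal) -> None:
        # Close the interest for the period at the old balance, then apply the change.
        days = (day - self.last_change).days
        if days < 0:
            raise ValueError("events must arrive in date order")
        self.accrued += self._interest_for(days)
        self.balance += delta
        self.last_change = day

    def accrued_at(self, day: date) -> Decimal:
        # Read-only, real-time view of the interest accrued up to `day`, in cents.
        pending = self._interest_for(max((day - self.last_change).days, 0))
        return (self.accrued + pending).quantize(Decimal("0.01"), ROUND_HALF_EVEN)


# Usage: deposit 1,000 on 1 January and another 1,000 on 11 January.
acc = InterestAccrual(annual_rate=Decimal("0.0365"), last_change=date(2024, 1, 1))
acc.on_balance_change(date(2024, 1, 1), Decimal("1000"))
acc.on_balance_change(date(2024, 1, 11), Decimal("1000"))
interest = acc.accrued_at(date(2024, 1, 21))
```

With a 3.65% rate, 10 days at 1,000 and 10 days at 2,000 accrue 1.00 and 2.00 respectively, so `interest` is 3.00. Real products add tiered rates, day-count conventions and capitalization rules that this sketch leaves out.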

Reporting generation processes.

This category of batch processes includes the generation of reports of different types:

- Operational reports. Reports that support the daily operations of branches and departments in the financial institution, generally containing lists of actions to be carried out or operations to be reviewed, such as resolution of payment incidents, review of credits whose credit rating has changed, evaluation of operations suspected of fraud, etc. At present, this type of report is being replaced by actionable task lists, notifications, etc., in channel applications or on mobile devices, generally accompanied by links to the specific functions needed to resolve the required action. Operational reports are therefore falling into disuse and are not a requirement that justifies the existence of batch processing platforms.

- Regulatory reporting. These are reports for regulatory compliance (communications to central banks or anti-money laundering units) or internal compliance (for example, internal audit reports). For a long time, these reports have been generated from informational systems, including data warehouses and data marts, using specific report generation and management software. Therefore, batch processing platforms do not appear to be required to implement these reporting functions, other than to provision data from the operational systems, and that is another case of the “Interfaces between applications” discussed above.

- Management reporting. These are reports provided to the different management levels of the financial institution to determine and adjust business strategies and their execution. Some examples are reports on the fulfillment of objectives for a client representative, the business results of a branch for its branch manager, or customer acquisition metrics for an internet banking manager. This is an area where real-time information is increasingly required, so that business decisions are made with the most up-to-date information possible, and where traditional reports are being replaced by dashboards fed in near real time by streaming business events captured as they occur. Like the previous types of reports, they do not seem to justify the existence of batch processes in new generation banking systems.

Financial transaction processing.

This category of batch processes sends, receives and processes payments, checks, trade finance operations and any other type of financial transaction involving several financial institutions and clearing houses. The most characteristic examples are payment instructions, including direct debits and credit transfers. These processes include not only the exchange of orders but also the subsequent clearing and settlement processes. The evolution of financial transactions towards real-time models (for example, immediate payments) makes batch processing unsuitable for these use cases. Moreover, batch processing has historically been used because traditional platforms, such as mainframes, were designed to perform this work optimally, which is not the case on new cloud infrastructures. These reasons explain why payment and financial transaction solutions increasingly apply real-time patterns, for example “pipes and filters”, even when the exchange vehicles themselves are bulk data files, which are decomposed into individual instructions that are processed independently.
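The decomposition of a bulk file into individual instructions can be sketched as follows. The file layout here is a hypothetical three-column CSV chosen for brevity (real exchanges often use ISO 20022 XML messages with many more fields), and `PaymentInstruction`, `split_file` and `process` are hypothetical names; the validation rule is a placeholder.

```python
import csv
import io
from dataclasses import dataclass
from decimal import Decimal
from typing import Iterator, List


@dataclass(frozen=True)
class PaymentInstruction:
    # Hypothetical minimal instruction; real schemes carry many more fields.
    end_to_end_id: str
    debtor_account: str
    amount: Decimal


def split_file(content: str) -> Iterator[PaymentInstruction]:
    # First stage: decompose the bulk file into individual instructions.
    for row in csv.DictReader(io.StringIO(content)):
        yield PaymentInstruction(row["id"], row["account"], Decimal(row["amount"]))


def process(instruction: PaymentInstruction,
            accepted: List[str], rejected: List[str]) -> None:
    # Next stage: each instruction is validated and routed on its own,
    # without waiting for the rest of the file (placeholder rule).
    if instruction.amount <= 0:
        rejected.append(instruction.end_to_end_id)
    else:
        accepted.append(instruction.end_to_end_id)


# Usage: a hypothetical direct debit file with one invalid instruction.
bulk = "id,account,amount\nE2E-1,ACC-1,120.50\nE2E-2,ACC-2,0\nE2E-3,ACC-3,75.00\n"
accepted: List[str] = []
rejected: List[str] = []
for instruction in split_file(bulk):
    process(instruction, accepted, rejected)
```

Because `split_file` is a generator, the first instruction is processed before the rest of the file has been read, and a rejected instruction does not hold back the valid ones.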

Conclusions

Institutions immersed in developing their new generation core banking systems must seriously reconsider whether they should provide batch processing capabilities in the new platform or whether, on the contrary, such capabilities are unnecessary or even a bad solution.

The banking scenario that gave rise to batch processes has radically changed: banks operate 24x7 and not only during office hours; the business requires real-time information for decision-making and for the use of automation technology and artificial intelligence; and the technology available for the new systems has evolved towards cloud models with pay-per-use rather than capacity-based pricing. These technologies are not optimized for batch processing, so implementing batch processes on them adds technical complexity.

With this change of scenario, there seems to be no reason for new generation systems to have batch components, and everything points to changing strategy and evolving towards alternative design patterns based on real-time or near-real-time processing.